Artificial Intelligence and Machine Learning

Generative Deep Learning for Multi-Scale, Multi-Wavelength Synthetic Solar Image Data

Principal Investigator:

Dr. Frederic Effenberger

Affiliation:

Ruhr-University Bochum, Germany

Local Project ID:

SunGANBoost

HPC Platform used:

JUWELS Booster at JSC

Date published:

Project Summary

The SunGANBoost project developed advanced deep learning models capable of generating realistic synthetic solar images from multi-wavelength observations. By training large generative adversarial, autoencoder, and diffusion models on data from NASA’s Solar Dynamics Observatory using the JUWELS Booster GPU supercomputer, the team achieved unprecedented fidelity in reproducing solar structures across wavelengths. The resulting models will support future solar physics research, helping scientists better understand and predict solar activity and its effects on space weather.

Project Report

The Challenge

The SunGANBoost project aimed to generate high-quality, synthetic solar images that mimic real observations across multiple wavelengths. Such models are crucial for advancing solar physics, enabling researchers to simulate solar activity, identify transient events, and test predictive algorithms. However, training generative deep learning models on high-resolution data presents a formidable computational challenge. Each image from NASA’s Solar Dynamics Observatory (SDO) has a resolution of up to 4096×4096 pixels and captures the solar atmosphere at ten different wavelengths. Processing and learning from such large and diverse datasets require enormous computational power and memory, far beyond what conventional computing systems can provide.
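
To give a feeling for the data volume, the following back-of-the-envelope sketch estimates the memory needed to hold a single ten-wavelength image stack. The storage format is an assumption made for illustration (32-bit floats per pixel); the report does not state how the project stored its data, and `frame_bytes` is a hypothetical helper.

```python
# Rough memory estimate for one multi-wavelength SDO image stack.
# Assumption (not from the report): each pixel value is a 32-bit float.

def frame_bytes(side_px: int, channels: int, bytes_per_value: int = 4) -> int:
    """Bytes needed to hold one square image stack in memory."""
    return side_px * side_px * channels * bytes_per_value

full = frame_bytes(4096, 10)     # full-resolution stack, ten wavelengths
reduced = frame_bytes(1024, 10)  # same stack at 1024x1024

print(f"4096x4096x10: {full / 2**20:.0f} MiB")     # 640 MiB
print(f"1024x1024x10: {reduced / 2**20:.0f} MiB")  # 40 MiB
print(f"ratio: {full // reduced}x")                # 16x
```

Under this assumption a single full-resolution stack already occupies 640 MiB before any network activations or gradients are stored, so a training batch of such stacks quickly exceeds the memory of a single accelerator.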

Why Supercomputing Was Required

The project relied on the JUWELS Booster GPU supercomputer at the Jülich Supercomputing Centre (JSC) to train and test complex neural networks. Each full-scale model run required tens of thousands of core hours. The team employed distributed training, gradient checkpointing, and advanced memory optimization techniques to process the data efficiently. These resources allowed the team to work directly at 1024×1024 resolution and to explore even higher resolutions, ensuring that the generated images captured fine solar structures with high accuracy.
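
Gradient checkpointing, one of the techniques named above, trades extra computation for lower memory use: only a few activations are kept during the forward pass, and the rest are recomputed segment by segment during the backward pass. The sketch below illustrates the idea on a toy chain of scalar `tanh` layers in plain Python. It is not the project's training code; the layer definition, the weights, and the segment length are all assumptions chosen for illustration.

```python
import math

def layer(w, x):
    """Toy 'layer': a scalar weight followed by tanh."""
    return math.tanh(w * x)

def layer_grad(w, y):
    """Derivative of tanh(w * x) with respect to x, written via the output y."""
    return w * (1.0 - y * y)

def grad_full(weights, x):
    """Backpropagate while keeping every activation in memory."""
    acts = [x]
    for w in weights:
        acts.append(layer(w, acts[-1]))
    grad = 1.0
    for w, y in zip(reversed(weights), reversed(acts[1:])):
        grad *= layer_grad(w, y)
    return grad, len(acts)  # gradient and number of stored values

def grad_checkpointed(weights, x, segment):
    """Keep only each segment's input; recompute the rest during backward."""
    checkpoints = []
    a = x
    for i, w in enumerate(weights):
        if i % segment == 0:
            checkpoints.append(a)  # store the input of this segment
        a = layer(w, a)            # intermediate outputs are discarded
    grad, peak = 1.0, 0
    for s in reversed(range(len(checkpoints))):
        seg_w = weights[s * segment:(s + 1) * segment]
        acts = [checkpoints[s]]
        for w in seg_w:            # recompute this segment's activations
            acts.append(layer(w, acts[-1]))
        peak = max(peak, len(checkpoints) + len(seg_w))
        for w, y in zip(reversed(seg_w), reversed(acts[1:])):
            grad *= layer_grad(w, y)
    return grad, peak  # gradient and peak number of stored values

weights = [0.9 + 0.01 * i for i in range(16)]
g_full, stored_full = grad_full(weights, 0.5)
g_ckpt, stored_ckpt = grad_checkpointed(weights, 0.5, segment=4)
print(math.isclose(g_full, g_ckpt), stored_full, stored_ckpt)  # True 17 8
```

Both routines return the same gradient, but the checkpointed version holds at most 8 values at once instead of 17, at the cost of roughly one additional forward pass. Deep learning frameworks provide the same mechanism for real networks, where the saved memory is what makes larger image resolutions feasible.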

Findings and Results

The team developed a universal autoencoder capable of compressing high-resolution SDO data while maintaining high reconstruction quality. Building on this, they implemented diffusion-based generative models that operate in the latent space to generate synthetic solar images and short video sequences. These models successfully reproduced the spatial and temporal features of real SDO data, achieving strong performance metrics in structural similarity and image fidelity. The approach reduced computational costs compared to previous models while improving the realism of generated images. In addition, the model supports conditional generation, in which one wavelength serves as input to predict the others, opening new possibilities for solar image synthesis and data completion.
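
Structural similarity, mentioned above as one of the evaluation metrics, compares the means, variances, and covariance of two images. The sketch below computes a simplified, single-window ("global") variant of the SSIM index in plain Python using the commonly cited constants K1 = 0.01 and K2 = 0.03. It is an illustration only: the report does not describe the project's evaluation code, standard SSIM implementations average the index over local windows, and the pixel values used here are made up.

```python
def global_ssim(x, y, data_range=1.0):
    """Simplified SSIM over two equally sized, flattened images.

    Uses one window covering the whole image instead of local windows.
    """
    n = len(x)
    if n == 0 or n != len(y):
        raise ValueError("images must be non-empty and of equal size")
    mx = sum(x) / n
    my = sum(y) / n
    vx = sum((a - mx) * (a - mx) for a in x) / n
    vy = sum((b - my) * (b - my) for b in y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y)) / n
    c1 = (0.01 * data_range) ** 2  # stabilises the luminance term
    c2 = (0.03 * data_range) ** 2  # stabilises the contrast/structure term
    return ((2 * mx * my + c1) * (2 * cov + c2)) / (
        (mx * mx + my * my + c1) * (vx + vy + c2)
    )

# Hypothetical pixel intensities scaled to [0, 1]; not real SDO data.
real = [0.10, 0.40, 0.80, 0.30]
generated = [0.12, 0.38, 0.79, 0.33]

print(global_ssim(real, real))       # identical images score 1.0
print(global_ssim(real, generated))  # close images score slightly below 1
```

A value of 1 indicates identical images, and lower values indicate growing differences in brightness, contrast, or structure.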

Impact and Beneficiaries

The project outcomes provide a foundation for new machine-learning tools in solar and space physics. Researchers can now generate realistic synthetic datasets for testing algorithms that detect solar flares, coronal mass ejections, and other transient events. These advancements support both academic research and operational forecasting of space weather, which affects satellite operations, communications, and power systems on Earth. The methods developed can also be adapted for other astrophysical and geophysical imaging applications where data are limited or costly to obtain. A bachelor's thesis, publications, and several conference presentations have resulted from this work.

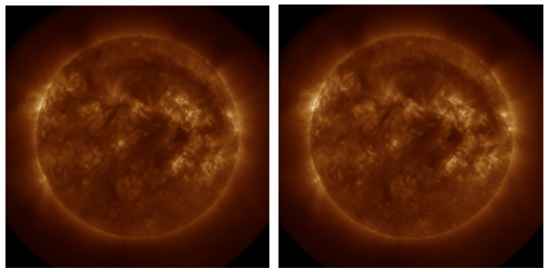

Example of a real (left) and a synthetic (right) solar image at 193 Å. The synthetic image was generated by the SunGANBoost diffusion model using multi-wavelength input data from SDO.

References

- Cherti, M., Czernik, A., Kesselheim, S., Effenberger, F., Jitsev, J., “A Comparative Study on Generative Models for High Resolution Solar Observation Imaging”, 2023, https://doi.org/10.48550/arXiv.2304.07169

- Effenberger, F., Vasile, R., Cherti, M., Kesselheim, S., Jitsev, J., “Generative Adversarial Deep Learning with Solar Images”, AGU Conference Presentation, Dec. 2021