ENVIRONMENT AND ENERGY

Buoyant-Convectively Driven Heat and Gas Exchange

Principal Investigator:

Herlina Herlina

Affiliation:

Institute for Hydromechanics, Karlsruhe Institute of Technology (KIT), Germany

Local Project ID:

pr28ca

HPC Platform used:

SuperMUC of LRZ

Date published:

Gas exchange across water surfaces receives increasing attention because of its importance to the global greenhouse gas budget. At present, most models used to estimate the gas flux consider only wind shear. To improve the accuracy of the predictions, a detailed study of buoyancy-driven gas transfer, which is a major contributor at low to moderate wind speeds, is necessary. The main challenge lies in resolving the extremely thin gas concentration boundary layer. To address this, direct numerical simulations (DNS) of gas transfer induced by surface cooling were performed on SuperMUC, using a numerical scheme that can resolve the thin diffusive layers on a relatively coarse mesh while avoiding spurious oscillations of the scalar quantity.

Research Partner: Dr. Jan G. Wissink (Brunel University London)

Introduction

The study of heat and atmospheric gas exchange across the air-water interface has received increasing interest in recent decades because of its important role in the global heat and greenhouse gas budgets. Most estimates of the heat/gas flux take only wind shear into account, which often results in an over- or under-prediction of the actual flux, particularly under low-wind conditions. Equatorial ocean regions and sheltered lakes are examples of regions with typically low wind speeds. Recent studies have reported that under such conditions the buoyancy-driven flux cannot be neglected and may even dominate. Hence, to improve the accuracy of heat and gas flux predictions, an in-depth understanding of the buoyant-convective mixing process in deep waters is required. To address this, a series of direct numerical simulations (DNS) of interfacial gas transfer driven by a surface-cooling-induced buoyant convective instability was performed [1, 2].

Results and Methods

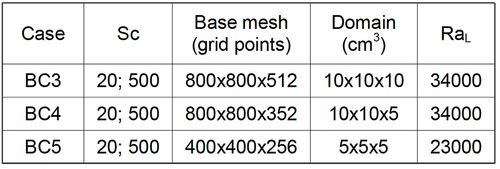

The DNS builds on earlier experiments performed at KIT. Of the experimental domain (50 × 50 × 42 cm³), only a small region adjacent to the water surface was modeled (Table 1). As in the experiments, the buoyant instability is caused by sudden cooling of the water surface. The velocity and concentration fields were initialized to zero and the temperature field to T = 1. Temperature is normalized so that T = 0 corresponds to the coldest temperature, at the surface, and T = 1 to the initial temperature of the warmer bulk water.
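The normalization can be written as a map of the dimensional temperature θ onto the interval [0, 1]. A minimal sketch, assuming a linear scaling between the surface temperature θ_s and the initial bulk temperature θ_b; the function name and the example temperatures are hypothetical and not taken from the experiments:

```python
def normalize_temperature(theta, theta_surface, theta_bulk):
    """Linear map of a dimensional temperature onto the normalized scale.

    T = 0 at the (coldest) surface temperature, T = 1 at the initial bulk
    temperature. Linearity is an assumption of this sketch.
    """
    if theta_bulk == theta_surface:
        raise ValueError("bulk and surface temperatures must differ")
    return (theta - theta_surface) / (theta_bulk - theta_surface)


# Hypothetical temperatures in degrees Celsius, for illustration only.
theta_s, theta_b = 20.0, 25.0
print(normalize_temperature(25.0, theta_s, theta_b))   # 1.0, initial bulk water
print(normalize_temperature(20.0, theta_s, theta_b))   # 0.0, cooled surface
print(normalize_temperature(23.75, theta_s, theta_b))  # 0.75, level of the Figure 1 isosurface
```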

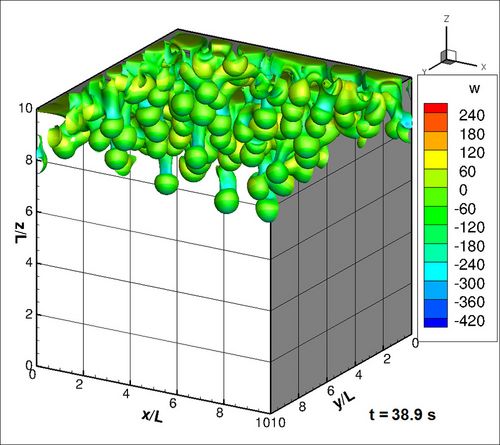

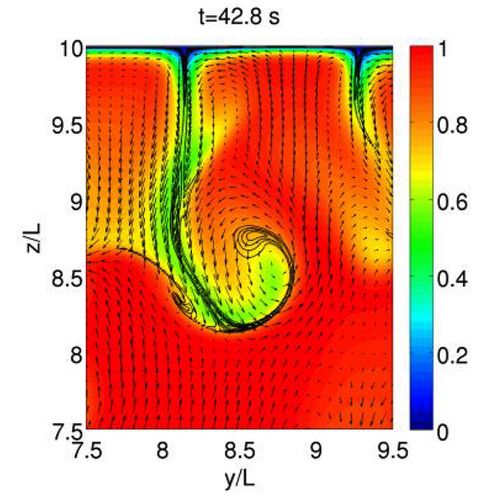

As a result, a thin thermal boundary layer of cool water formed adjacent to the air-water interface, with an even thinner layer of gas-saturated water at the top. At t = 9.6 s, small random disturbances were added to the temperature field to trigger the instability, resulting in the formation of thin falling sheets of cold water. Consequently, warmer water from the bulk started to move upward, forming convection cells. At intersections of three or more convection cells, where the additional cold water falling down increased the local sinking velocity, mushroom-like plumes formed (Figure 1). These cold sinking plumes transport highly gas-saturated fluid deep into the bulk, as shown in Figure 2.

Table 1: Overview of the simulations.

In the grid plane immediately beneath the surface, a typical net-like pattern is visible, which can be interpreted as the footprint of the convection cells in the water.

Figure 1: Isosurface of T = 0.75 coloured by the vertical velocity, normalized by U = κ/L = 0.0158 mm/s, where κ is the thermal diffusivity of water at 298.15 K.

Copyright: Institute for Hydromechanics, KIT

Snapshots of the net-like pattern show that the convection cells tend to grow over time. The high resolution of the present DNS allowed a quantitative prediction of the average convection cell size near the surface, L_C. L_C and the surface velocity fluctuations u_surf were found to be suitable length and velocity scales, respectively, for the large-eddy model used to estimate the transfer velocity K_L.
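In its classical form, the large-eddy model estimates the transfer velocity as K_L = c (D u / L)^(1/2), where D is the molecular diffusivity of the gas and u and L are the characteristic velocity and length scales; here u = u_surf and L = L_C. A minimal sketch, assuming D = ν/Sc and treating the coefficient c as a hypothetical placeholder that would have to be calibrated against the DNS; the function name and the input values are illustrative only:

```python
import math


def large_eddy_transfer_velocity(u_surf, l_c, schmidt, nu, c=1.0):
    """Large-eddy-model estimate K_L = c * sqrt(D * u_surf / L_C).

    u_surf  : surface velocity fluctuation scale [m/s]
    l_c     : mean convection cell size near the surface [m]
    schmidt : Schmidt number Sc = nu / D of the dissolved gas
    nu      : kinematic viscosity of water [m^2/s]
    c       : model coefficient (hypothetical placeholder, must be calibrated)
    """
    if min(u_surf, l_c, schmidt, nu) <= 0:
        raise ValueError("all inputs must be positive")
    diffusivity = nu / schmidt  # molecular diffusivity D of the gas
    return c * math.sqrt(diffusivity * u_surf / l_c)


# Hypothetical inputs, chosen only to illustrate the Sc scaling.
nu_water = 0.9e-6  # m^2/s, approximate value for water near room temperature
k_500 = large_eddy_transfer_velocity(u_surf=1e-3, l_c=1e-2, schmidt=500, nu=nu_water)
k_125 = large_eddy_transfer_velocity(u_surf=1e-3, l_c=1e-2, schmidt=125, nu=nu_water)
print(k_500 / k_125)  # approximately 0.5, reflecting K_L ~ Sc^(-1/2)
```

The example shows the practical role of L_C and u_surf: once both are known from the DNS, K_L for any Schmidt number follows from the same two scales.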

As illustrated in Figure 2 by the isolines of the scalar concentration with Schmidt number Sc = 500 (relevant for the transfer of oxygen in water), the transfer of high-Sc atmospheric gases into water is characterized by an extremely thin gas boundary layer and by steep instantaneous gradients in other regions. To simulate this problem accurately, we used a specially designed numerical scheme that resolves the scalar field without under- or overshoots of the scalar quantity. In this code, a fifth-order WENO scheme [3] for scalar convection, combined with a fourth-order central method for scalar diffusion, was implemented on a staggered and stretched mesh. In the simulations, up to five convection-diffusion equations for the scalars and temperature were solved simultaneously (further details in [2, 4]).
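The two spatial operators named above can be illustrated in one dimension. The sketch below is a simplified stand-in, not the production code: it assumes a uniform periodic grid instead of the staggered, stretched mesh, a constant positive advection velocity, the widely used Jiang–Shu form of fifth-order WENO (which may differ in detail from [3]), and hypothetical function names. It shows why the convective fluxes do not oscillate across a sharp step: sub-stencils that span the jump receive almost zero weight.

```python
import math


def weno5_flux(f):
    """Fifth-order WENO (Jiang-Shu) flux at face i+1/2 for every point i.

    Finite-difference form for a positive advection velocity on a uniform,
    periodic 1-D grid: (flux[i] - flux[i-1]) / h approximates df/dx at x_i.
    """
    n = len(f)
    eps = 1e-6  # avoids division by zero when a sub-stencil is perfectly smooth
    flux = []
    for i in range(n):
        fm2, fm1, f0, fp1, fp2 = (f[(i + k) % n] for k in (-2, -1, 0, 1, 2))
        # Third-order candidate fluxes on the three sub-stencils.
        q0 = (2 * fm2 - 7 * fm1 + 11 * f0) / 6
        q1 = (-fm1 + 5 * f0 + 2 * fp1) / 6
        q2 = (2 * f0 + 5 * fp1 - fp2) / 6
        # Smoothness indicators: large when a sub-stencil spans a steep gradient.
        b0 = 13 / 12 * (fm2 - 2 * fm1 + f0) ** 2 + 0.25 * (fm2 - 4 * fm1 + 3 * f0) ** 2
        b1 = 13 / 12 * (fm1 - 2 * f0 + fp1) ** 2 + 0.25 * (fm1 - fp1) ** 2
        b2 = 13 / 12 * (f0 - 2 * fp1 + fp2) ** 2 + 0.25 * (3 * f0 - 4 * fp1 + fp2) ** 2
        # Nonlinear weights built from the optimal linear weights 1/10, 6/10, 3/10.
        a0 = 0.1 / (eps + b0) ** 2
        a1 = 0.6 / (eps + b1) ** 2
        a2 = 0.3 / (eps + b2) ** 2
        flux.append((a0 * q0 + a1 * q1 + a2 * q2) / (a0 + a1 + a2))
    return flux


def central4_second_derivative(f, h):
    """Fourth-order central approximation of d2f/dx2 on a uniform periodic grid."""
    n = len(f)
    return [
        (-f[(i - 2) % n] + 16 * f[(i - 1) % n] - 30 * f[i]
         + 16 * f[(i + 1) % n] - f[(i + 2) % n]) / (12 * h * h)
        for i in range(n)
    ]


def scalar_rhs(c, u, diffusivity, h):
    """Semi-discrete dc/dt = -u dc/dx + D d2c/dx2 for a constant velocity u > 0."""
    flux = weno5_flux(c)  # WENO reconstruction of c; multiplied by u below
    d2c = central4_second_derivative(c, h)
    return [-u * (flux[i] - flux[i - 1]) / h + diffusivity * d2c[i] for i in range(len(c))]


# A sharp step, like the edge of a thin saturated layer: the WENO fluxes stay
# within the range of the data instead of over- or undershooting.
step = [0.0] * 10 + [1.0] * 10
step_flux = weno5_flux(step)
print(min(step_flux) > -1e-9, max(step_flux) < 1 + 1e-9)  # True True

# Smooth check of the diffusion operator: d2/dx2 sin(x) = -sin(x).
n = 64
h = 2 * math.pi / n
wave = [math.sin(i * h) for i in range(n)]
err = max(abs(d + w) for d, w in zip(central4_second_derivative(wave, h), wave))
print(err < 1e-5)  # True
```

Splitting the operators this way mirrors the design choice described above: the nonlinear WENO weighting is applied only to convection, where steep gradients would otherwise trigger oscillations, while diffusion uses a cheaper linear central stencil.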

Figure 2: Correlation between the temperature field (colour) and the Sc = 500 scalar (isolines: C = 0.1 to 0.6).

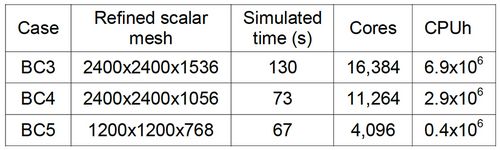

Copyright: Institute for Hydromechanics, KIT

To optimize load balancing, the computational domain of each simulation was divided into a number of equal-size blocks, each assigned to its own processing core (Table 2). The standard Message Passing Interface (MPI) was used to exchange data between processes. MPI-IO routines were used to read and write the large amounts of data that must be stored periodically so that runs can be continued. Memory usage was minimized by solving certain scalars on the coarse mesh and others on the refined mesh.
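A minimal sketch of an equal-size block decomposition, assuming a Cartesian process grid whose dimensions divide the global grid exactly; the function name, grid size and process counts are hypothetical and not those of Table 2. In an MPI code a comparable rank-to-block mapping would typically come from a Cartesian communicator (MPI_Cart_create); here it is computed directly so the example runs without MPI:

```python
from itertools import product


def block_decomposition(global_shape, proc_grid):
    """Split a 3-D grid into equal-size blocks, one block per processing core.

    Returns {rank: (block coordinates, global start index, local shape)}.
    Ranks are numbered with the last dimension varying fastest.
    """
    if any(n % p for n, p in zip(global_shape, proc_grid)):
        raise ValueError("each grid dimension must be divisible by its process count")
    local = tuple(n // p for n, p in zip(global_shape, proc_grid))
    blocks = {}
    for rank, coords in enumerate(product(*(range(p) for p in proc_grid))):
        start = tuple(c * m for c, m in zip(coords, local))
        blocks[rank] = (coords, start, local)
    return blocks


# Hypothetical example: a 384 x 384 x 192 grid on an 8 x 8 x 4 process grid.
blocks = block_decomposition((384, 384, 192), (8, 8, 4))
print(len(blocks))  # 256 cores
print(blocks[0])    # ((0, 0, 0), (0, 0, 0), (48, 48, 48))
print(blocks[255])  # ((7, 7, 3), (336, 336, 144), (48, 48, 48))
```

Because every block holds the same number of grid points, each core performs the same amount of work per time step, which is what makes this layout load-balanced.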

As noted above, resolving high-Sc transport processes requires a very fine mesh for the scalar. Thanks to the numerical scheme used, a refinement factor of 3 for the Sc = 500 scalar field was found to be sufficient in the present runs. The required computing resources were still significant but feasible (cf. Table 2). Each simulation typically generated twelve files. The overall storage needed (including the ongoing large-scale simulation described below) is about 19 TB.
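The cost of refinement can be estimated with the classical Batchelor scaling, in which the smallest scalar length scale varies as Sc^(-1/2). The sketch below assumes a Prandtl number of about 6 for the temperature field (water near 25 °C), a coarse mesh that just resolves temperature, and refinement in all three directions; the function names and the coarse grid size are hypothetical. Under these assumptions the scaling alone would suggest a refinement of roughly 9 for the Sc = 500 scalar, whereas the scheme described above needed only 3, which still multiplies the number of points in that field by 27:

```python
import math


def batchelor_refinement(schmidt, prandtl):
    """Ratio of the smallest temperature and scalar length scales, sqrt(Sc / Pr).

    Based on the Batchelor scaling eta_B ~ eta_K * Sc^(-1/2).
    """
    return math.sqrt(schmidt / prandtl)


def refined_field_bytes(coarse_shape, refinement, bytes_per_value=8):
    """Memory for one scalar field refined by `refinement` in every direction."""
    points = 1
    for n in coarse_shape:
        points *= n * refinement
    return points * bytes_per_value


PRANDTL_WATER = 6.0  # assumed, approximate value for water near 25 degC
print(round(batchelor_refinement(500, PRANDTL_WATER), 1))  # 9.1

coarse = (256, 256, 256)  # hypothetical coarse grid
coarse_bytes = refined_field_bytes(coarse, 1)
fine_bytes = refined_field_bytes(coarse, 3)
print(fine_bytes // coarse_bytes)  # 27
print(fine_bytes / 2**30)  # 3.375 GiB for one refined double-precision field
```

This also shows why solving only the high-Sc scalar on the refined mesh, and the remaining fields on the coarse mesh, saves substantial memory.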

Table 2: Refined mesh size and used resources.

On-going Research/Outlook

SuperMUC allowed us to perform very large-scale, highly accurate numerical simulations of gas transfer across the air-water interface at high Schmidt numbers. The installation of Phase 2 made it possible to further increase the resolution of our simulations, so that we are currently able to run simulations at higher turbulent Reynolds numbers (R_T). To our knowledge, these DNS are the first that allow a detailed study of gas transfer driven by high-intensity turbulence diffusing from below (R_T up to 2000). Such highly turbulent simulations are crucial for studying the role of small vortical structures in the gas transfer mechanism.

The simulations described above used idealized conditions without surface contamination (free-slip boundary condition). In reality, water surfaces are rarely clean. We therefore aim to perform further DNS of gas transfer that include complexities occurring in nature, such as surface contamination and the combined effect of buoyancy-induced and isotropic-turbulence-induced flow.

References and Links

[1] http://www.ifh.kit.edu/26_1776.php

[2] Wissink J.G., Herlina H. 2016. J. Fluid Mech. 787, 508–540.

[3] Liu X., Osher S., Chan T. 1994. J. Comput. Phys. 115, 200–212.

[4] Kubrak B., Herlina H., Greve F., Wissink J.G. 2013. J. Comput. Phys. 240, 158–173.

Scientific Contact:

Dr.-Ing. H. Herlina

Institute for Hydromechanics

Group: Computational Fluid Dynamics

Karlsruhe Institute of Technology (KIT)

D-76131 Karlsruhe (Germany)

e-mail: herlina [at] kit.edu